Note: This article was originally published on Librato, which has since been merged with SolarWinds® AppOptics™. Learn how to get started with monitoring CherryPy performance using AppOptics.

We are pleased to extend our ability to monitor popular Python web frameworks by adding support for CherryPy, a minimalist, object-oriented web framework. As with Django and Flask, you can now monitor your CherryPy web application’s health and performance in a matter of minutes, with zero modifications to your application code.

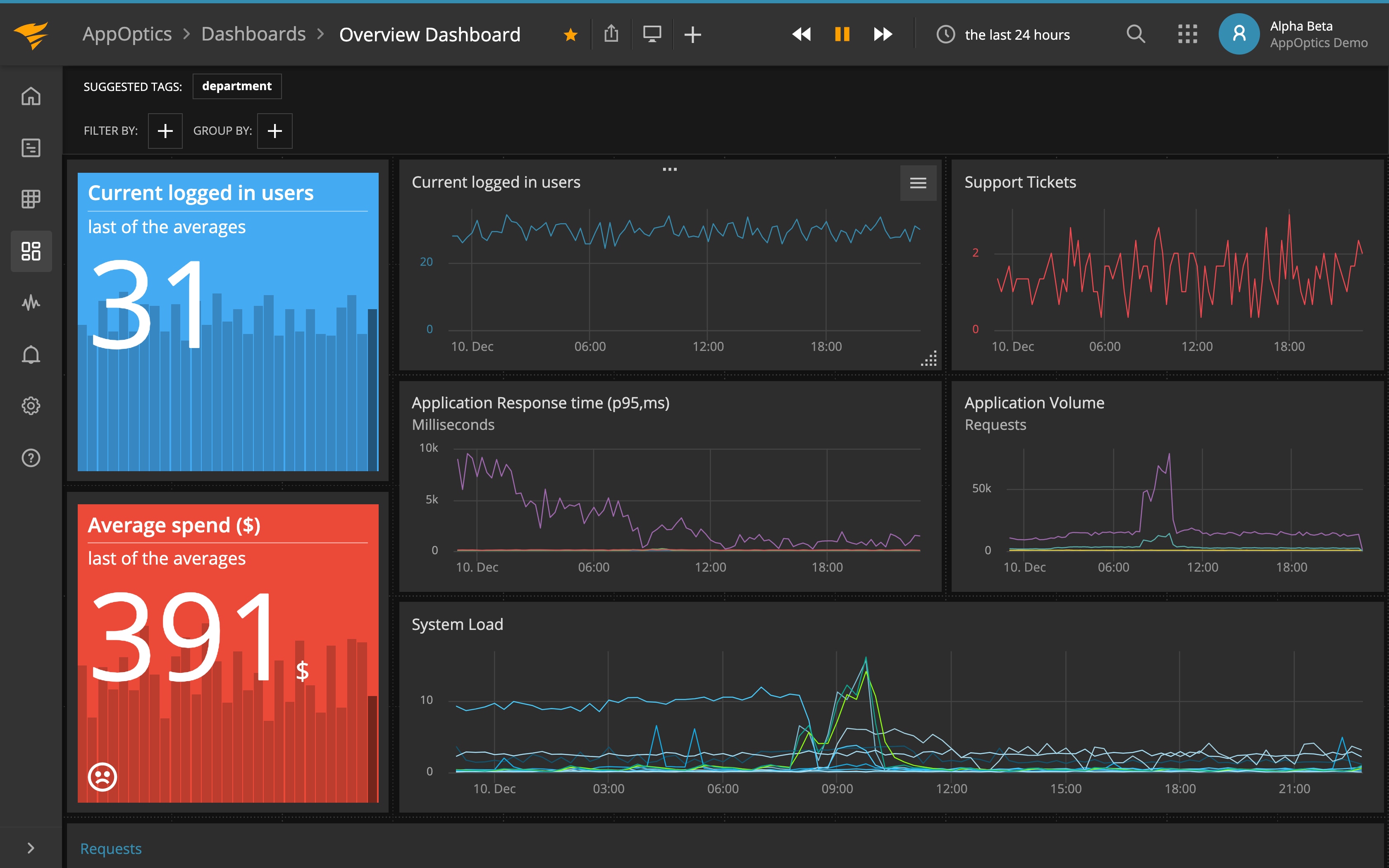

If you are new to SolarWinds Librato™, check out our instructions on getting started. Otherwise, sign in to your Librato account, create a CherryPy integration, and follow the instructions to install our binding agent, then configure and (re)launch your web application. Within a few minutes, you’ll begin to see metrics in your new curated CherryPy dashboard.

Features

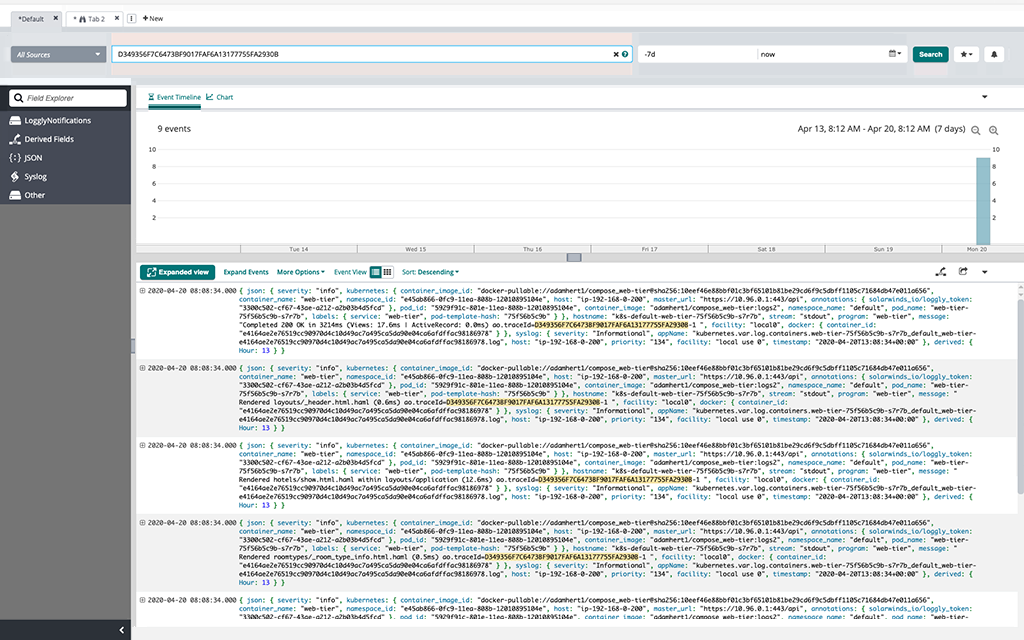

Our instrumentation reports over 80 metrics related to the performance and health of your CherryPy web application. It’s easy to fine-tune the reported metrics using the provided configuration file.

-

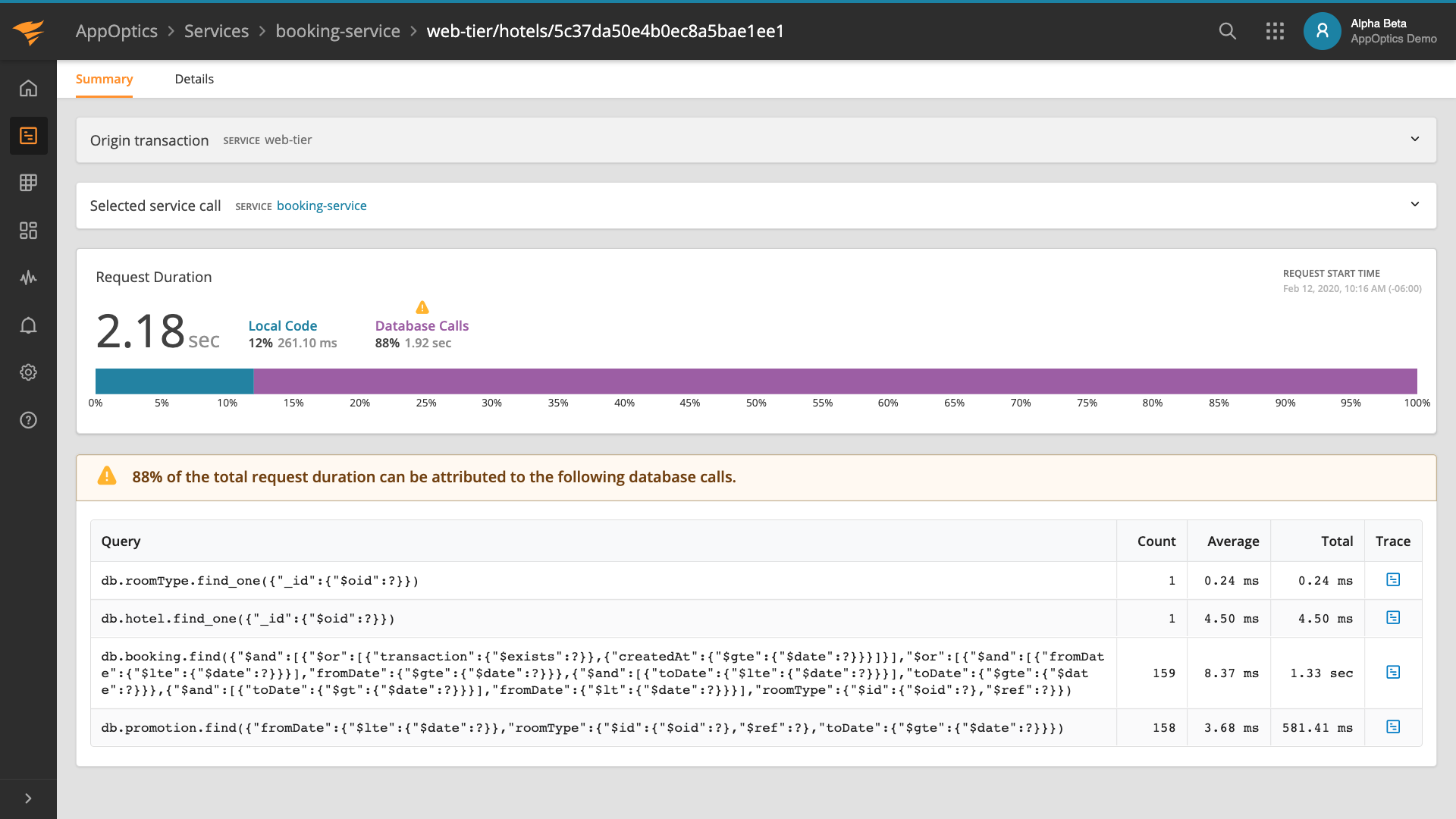

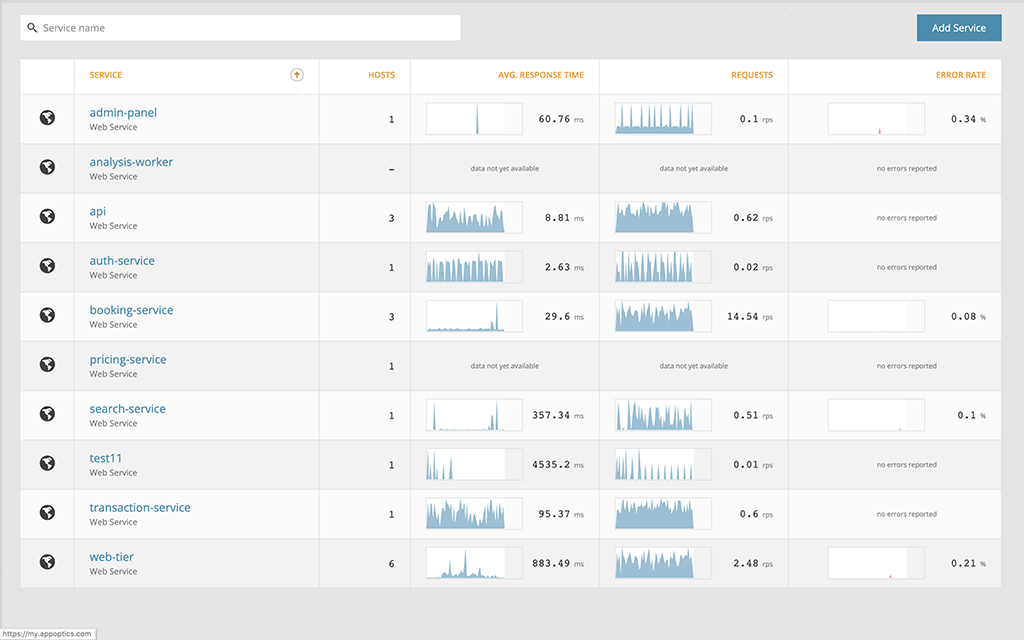

Current and historical average web response times, including visibility into model layer latency, WSGI application layer overhead, time spent accessing external services, and in the application code itself.

-

Overall throughput, broken down by HTTP status codes.

-

Error request percentages.

-

Log volume for critical, error, and warning messages, achieved by instrumenting the standard logging module (a variation in the log volume can often signal anomalous behavior).
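To make the log volume metric concrete, here is a minimal Python 3 sketch of counting log messages by severity with a custom handler from the standard `logging` module. It is an illustration only: the `LevelCountingHandler` class and the `demo_app` logger name are hypothetical, and this article does not show how the agent itself hooks into logging.

```python
import logging
from collections import Counter


class LevelCountingHandler(logging.Handler):
    """Counts log records by level name instead of writing them anywhere."""

    def __init__(self, level=logging.WARNING):
        super().__init__(level=level)
        self.counts = Counter()

    def emit(self, record):
        # A real agent would forward these counts to a metrics backend;
        # this sketch only keeps them in memory.
        self.counts[record.levelname] += 1


handler = LevelCountingHandler()
logger = logging.getLogger("demo_app")  # hypothetical logger name
logger.addHandler(handler)
logger.propagate = False  # keep the demo from printing to stderr

logger.info("ignored: below WARNING")
logger.warning("disk usage high")
logger.error("request failed")
logger.error("request failed again")
logger.critical("database unreachable")

print(dict(handler.counts))
```

Because the handler only counts records at WARNING and above, a sudden jump in any of the three counters is the kind of variation the dashboard is meant to surface.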

System Requirements

You’ll need a recent stable version of CherryPy running on Python 2.7 or 3.

How It Works

Our instrumentation is designed to work with no code modifications to your application.

- Installing our pip package gives you the ability to transparently wrap the appropriate CherryPy and Python classes and report useful measurements to Librato. However, the instrumentation doesn’t kick in unless you run your application using the launcher utility (see below).
- Running your application using our launcher utility enables the instrumentation, which ultimately reports the metrics for the app.
- The launcher also spins up a bundled StatsD process, which aggregates and reports measurements to Librato.
- Metrics are reported to Librato asynchronously, in order to reduce the performance impact on executing web requests.
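The general pattern described above (wrap a method without touching application code, then hand measurements to a background reporter) can be sketched in Python 3 as follows. This is not the agent's implementation: `AsyncReporter`, `instrument`, `udp_sender`, `DemoPage`, and the metric name are all hypothetical, and the StatsD address `127.0.0.1:8125` is assumed to be the conventional default rather than anything this article specifies.

```python
import functools
import queue
import socket
import threading
import time


def udp_sender(host="127.0.0.1", port=8125):
    """Return a callable that sends one StatsD line per UDP datagram."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    return lambda line: sock.sendto(line.encode("ascii"), (host, port))


class AsyncReporter:
    """Queues measurements and ships them from a background thread."""

    def __init__(self, send):
        self._send = send
        self._queue = queue.Queue()
        threading.Thread(target=self._drain, daemon=True).start()

    def timing(self, name, millis):
        # StatsD timer line format: <name>:<value>|ms
        self._queue.put("%s:%d|ms" % (name, millis))

    def flush(self):
        self._queue.join()  # block until every queued line was handled

    def _drain(self):
        while True:
            line = self._queue.get()
            try:
                self._send(line)
            except Exception:
                pass  # never let a reporting failure kill the worker
            finally:
                self._queue.task_done()


def instrument(owner, attr, metric, reporter):
    """Replace owner.attr with a wrapper that times each call."""
    original = getattr(owner, attr)

    @functools.wraps(original)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return original(*args, **kwargs)
        finally:
            # Only enqueue here; the network send happens off-thread.
            reporter.timing(metric, (time.perf_counter() - start) * 1000)

    setattr(owner, attr, wrapper)


class DemoPage:  # hypothetical stand-in for a framework class
    def index(self):
        return "hello"


sent = []
reporter = AsyncReporter(sent.append)  # use udp_sender() to reach a real StatsD
instrument(DemoPage, "index", "demo.index.latency", reporter)
result = DemoPage().index()
reporter.flush()
print(result, sent)
```

The request path only pays for a timer read and a queue put; the actual send happens on the worker thread, which is the point of reporting asynchronously.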

Optionally, you can create a Python virtual environment to isolate the instrumentation to your CherryPy web application.
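As a rough illustration, on Python 3 an isolated environment can be created with the standard library `venv` module. The directory name below is hypothetical, and Python 2.7 users would need the separate `virtualenv` tool instead.

```python
import os
import tempfile
import venv

# Hypothetical location; in practice this would sit next to your application.
env_dir = os.path.join(tempfile.mkdtemp(), "cherrypy-env")

# with_pip=False keeps the sketch fast and offline; pass with_pip=True
# when you intend to install packages into the environment.
venv.create(env_dir, with_pip=False)

# A successfully created environment contains a pyvenv.cfg file.
created = os.path.isfile(os.path.join(env_dir, "pyvenv.cfg"))
print(env_dir, created)
```

Packages installed with that environment's interpreter stay separate from the system-wide Python installation.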

More Information

Check out our Knowledge Base article for step-by-step details on configuring and running CherryPy web applications. If you’d like to contribute features or bug fixes, please create a pull request on the librato-python-web GitHub project.